Understanding AI Decision-Making in Multi-Agent Environments

Why Multi-Agent AI Decisions Matter

Imagine a city where hundreds of autonomous agents navigate busy intersections, collaborative AI fleets deliver packages, and AI trading bots make split-second financial decisions. All of these systems operate in multi-agent environments, where AI decision-making frameworks determine not just individual actions, but collective outcomes.

Understanding AI decision-making in multi-agent systems is more than a technical exercise; it is vital for safety, efficiency, and trust. According to recent studies, the global market for AI multi-agent systems is projected to exceed $15 billion by 2030, highlighting the growing reliance on collaborative AI across industries.

With this growth comes complexity: as autonomous agents interact, analyzing AI decisions in multi-agent environments becomes critical for transparency, accountability, and ethical governance.

Understanding Multi-Agent Environments

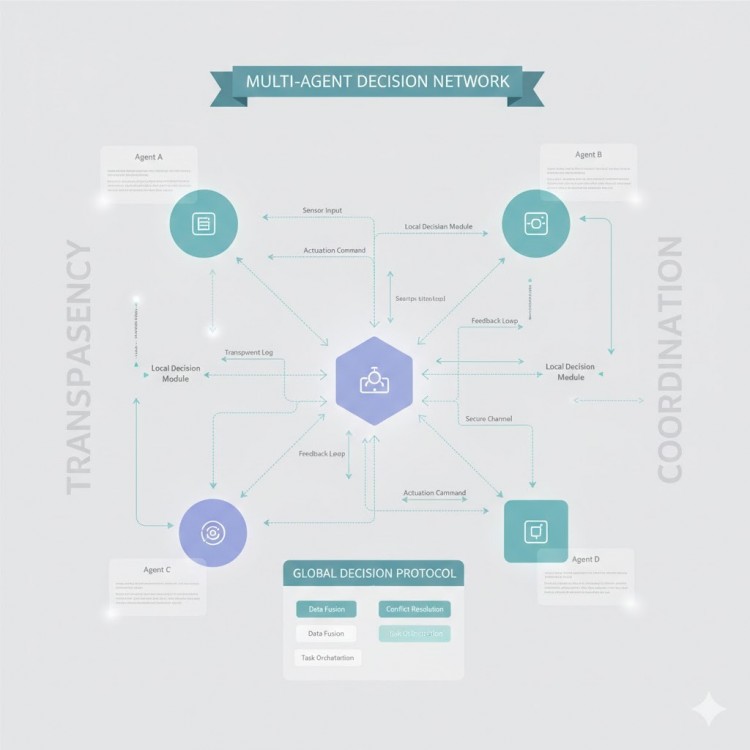

A multi-agent system (MAS) is any network where autonomous AI agents interact, compete, or collaborate to achieve objectives. Unlike single-agent systems, AI multi-agent environments introduce interdependencies that require sophisticated AI coordination frameworks.

Real-world examples include:

- Autonomous vehicle fleets negotiating traffic patterns in real time using AI decision-making frameworks.

- Financial AI trading systems where multiple bots compete and cooperate to optimize market positions.

- Smart energy grids, managed by AI multi-agent systems, balancing supply and demand efficiently.

- Collaborative robotics, where autonomous agents coordinate production tasks in smart factories.

By understanding AI multi-agent scenarios, engineers and researchers can design systems that maximize efficiency and minimize errors.

How AI Makes Decisions in Multi-Agent Systems

AI decision-making in multi-agent environments relies on a blend of algorithms and learning strategies:

- Rule-Based Systems: Deterministic if-then rules that suit predictable environments.

- Reinforcement Learning (RL): Autonomous agents learn optimal strategies through feedback loops in multi-agent simulations.

- Game-Theory Approaches: AI agents evaluate others’ potential moves to optimize outcomes in competitive environments.
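
To make the reinforcement learning item above more concrete, here is a minimal sketch of two independent learners at a shared intersection. Everything in it is an assumption for illustration: the payoff values, the `REWARD` table, the `choose` and `train` helpers, and the simplification to a single state (so each agent only tracks one value per action). It is not how any particular production system works, just one simple way a feedback loop can shape behavior.

```python
import random

# Hypothetical payoffs for a two-agent intersection (illustrative values only).
# Actions: 0 = yield, 1 = go.
# REWARD[(a0, a1)] = (reward for agent 0, reward for agent 1)
REWARD = {
    (0, 0): (-1.0, -1.0),    # both wait: small delay penalty
    (0, 1): (0.0, 1.0),      # agent 1 crosses
    (1, 0): (1.0, 0.0),      # agent 0 crosses
    (1, 1): (-10.0, -10.0),  # both go: collision
}


def choose(q_values, epsilon, rng):
    """Epsilon-greedy: explore with probability epsilon, else take the best-known action."""
    if rng.random() < epsilon:
        return rng.randrange(2)
    return max(range(2), key=lambda a: q_values[a])


def train(episodes=5000, alpha=0.1, epsilon=0.1, seed=0):
    """Train two independent, stateless Q-learners against each other."""
    rng = random.Random(seed)
    q = [[0.0, 0.0], [0.0, 0.0]]  # q[agent][action]
    for _ in range(episodes):
        actions = (choose(q[0], epsilon, rng), choose(q[1], epsilon, rng))
        rewards = REWARD[actions]
        for agent in range(2):
            a = actions[agent]
            # Move the estimate for the chosen action toward the observed reward.
            q[agent][a] += alpha * (rewards[agent] - q[agent][a])
    return q


q_table = train()
for agent, values in enumerate(q_table):
    print(f"agent {agent}: Q(yield)={values[0]:.2f}, Q(go)={values[1]:.2f}")
```

Each agent treats the other as part of its environment, which is the simplest multi-agent setup and also the least stable one. Real systems typically add state, shared information, or centralized training on top of this idea.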

Example: In autonomous traffic systems, collaborative AI agents negotiate intersections. Each agent applies AI decision-making frameworks to predict others’ actions and avoid collisions, ensuring safe and efficient operations.
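
The intersection example can be sketched as a tiny two-player game. The snippet below is a rough illustration under stated assumptions: the `PAYOFFS` numbers are made up, and `best_response` and `pure_nash_equilibria` are hypothetical helper names. It checks which action pairs are stable, meaning neither agent would gain by changing its move alone.

```python
from itertools import product

ACTIONS = ("yield", "go")

# Hypothetical payoffs as (first agent, second agent); values are illustrative only.
PAYOFFS = {
    ("yield", "yield"): (-1, -1),   # both wait: small delay
    ("yield", "go"): (0, 1),        # second agent crosses
    ("go", "yield"): (1, 0),        # first agent crosses
    ("go", "go"): (-10, -10),       # collision
}


def best_response(agent, other_action):
    """Return the action that maximizes this agent's payoff given the other's action."""
    def payoff(action):
        profile = (action, other_action) if agent == 0 else (other_action, action)
        return PAYOFFS[profile][agent]
    # This table has no ties, so the best response is unique.
    return max(ACTIONS, key=payoff)


def pure_nash_equilibria():
    """A profile is a pure Nash equilibrium if each action is a best response to the other."""
    return [
        (a0, a1)
        for a0, a1 in product(ACTIONS, repeat=2)
        if best_response(0, a1) == a0 and best_response(1, a0) == a1
    ]


print(pure_nash_equilibria())  # [('yield', 'go'), ('go', 'yield')]
```

With these payoffs the two stable outcomes are the ones where exactly one agent goes. The game alone does not say which agent that should be, which is one reason coordination rules such as right-of-way are layered on top.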

The Challenge of Understanding AI Decisions

Even advanced AI multi-agent systems can produce unpredictable outcomes, which is why explainable AI in multi-agent systems is critical. Explainability:

- Ensures transparency and accountability in AI decision-making frameworks.

- Reduces operational errors in autonomous agent interactions.

- Addresses ethical concerns such as bias, fairness, and responsibility in collaborative AI systems.

Techniques include:

- Visualizing agent decision pathways in AI simulation platforms

- Post-hoc explanations of AI agent actions

- “What-if” analyses to explore alternative decisions

For example, a drone delivery system can show real-time reasoning of autonomous agents using AI multi-agent simulations, improving trust and operational safety.
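
A "what-if" analysis with a post-hoc explanation can be sketched in a few lines. This is a simplified illustration, not a real drone stack: the `Observation` fields, the thresholds, and the `decide` and `what_if` functions are all hypothetical. The idea is to re-run the same policy on a modified input and report whether the action changes, along with the rule that triggered it.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Observation:
    battery_pct: float       # remaining battery, 0-100
    wind_mps: float          # wind speed in metres per second
    nearest_drone_m: float   # distance to the closest other drone


def decide(obs):
    """Hypothetical rule-based drone policy; returns (action, reason)."""
    if obs.nearest_drone_m < 10:
        return "hold", "another drone is within 10 m"
    if obs.battery_pct < 20:
        return "return_to_base", "battery below 20%"
    if obs.wind_mps > 12:
        return "reroute", "wind above 12 m/s"
    return "proceed", "all checks passed"


def what_if(obs, **changes):
    """Re-run the policy on a modified observation and report whether the action changes."""
    baseline_action, _ = decide(obs)
    alt_action, alt_reason = decide(replace(obs, **changes))
    return {
        "changes": changes,
        "baseline": baseline_action,
        "alternative": alt_action,
        "reason": alt_reason,
        "decision_changed": alt_action != baseline_action,
    }


current = Observation(battery_pct=65, wind_mps=8, nearest_drone_m=40)
print(decide(current))
print(what_if(current, wind_mps=15))
print(what_if(current, nearest_drone_m=5))
```

Because the policy here is a short list of rules, the reason string is exact. For learned policies, a similar what-if loop still works, but the explanation usually has to come from a separate attribution or surrogate method.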

Tools & Platforms That Make AI Decisions Easier to Understand

Modern AI agent development platforms enable developers to design multi-agent systems with transparency and participatory oversight:

- Tools that simplify prototyping, testing, and debugging autonomous agents.

- A platform for creating, simulating, and visualizing collaborative AI agents, ideal for testing AI decision-making frameworks.

Benefits:

- Rapid iteration of AI multi-agent scenarios

- Improved AI transparency and ethical design

- Integration of human feedback for safer autonomous agent coordination

These tools make it easier to implement explainable AI in multi-agent systems, reducing errors and improving user trust.

Evaluating Multi-Agent AI Performance

AI multi-agent systems require specialized metrics:

- Task efficiency: Success rate of achieving objectives.

- Cooperation rate: Effectiveness of collaborative AI agents working together.

- Conflict resolution: Ability to handle competing priorities.

- Safety and reliability: Ensuring ethical and secure operations.

Evaluation is often done in AI simulation platforms before deployment. Feedback loops enable autonomous agents to adapt and improve within multi-agent environments, enhancing transparency and operational efficiency.
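
As a rough sketch of how the metrics above might be computed from simulation logs, the snippet below aggregates counts across episodes. The `Episode` fields, the `summarize` function, and the sample numbers are assumptions made for illustration; real platforms will log different fields and may define each metric differently.

```python
from dataclasses import dataclass


@dataclass
class Episode:
    tasks_attempted: int
    tasks_completed: int
    joint_actions: int         # steps where agents had a chance to cooperate
    cooperative_actions: int   # steps where they actually cooperated
    conflicts: int
    conflicts_resolved: int
    safety_violations: int


def ratio(numerator, denominator):
    """Safe division that returns 0.0 when there is nothing to measure."""
    return numerator / denominator if denominator else 0.0


def summarize(episodes):
    """Aggregate per-episode counts into the four metrics discussed above."""
    def total(field):
        return sum(getattr(e, field) for e in episodes)

    return {
        "task_efficiency": ratio(total("tasks_completed"), total("tasks_attempted")),
        "cooperation_rate": ratio(total("cooperative_actions"), total("joint_actions")),
        "conflict_resolution": ratio(total("conflicts_resolved"), total("conflicts")),
        "safety_violations_per_episode": ratio(total("safety_violations"), len(episodes)),
    }


# Made-up sample data for two simulated episodes.
runs = [
    Episode(10, 8, 20, 15, 4, 3, 0),
    Episode(10, 9, 20, 17, 1, 1, 1),
]
report = summarize(runs)
for name, value in report.items():
    print(f"{name}: {value:.2f}")
```

Summing counts before dividing weights longer episodes more heavily than averaging per-episode rates would. Either choice can be reasonable, as long as it is applied consistently across the runs being compared.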

Real-World Success Stories

Multi-agent AI is revolutionizing industries:

- Autonomous vehicle fleets: Collaborative AI agents manage traffic efficiently.

- Collaborative robotics: Autonomous agents coordinate in factories, improving production speed by 40%.

- Financial trading bots: AI multi-agent systems optimize strategies while managing risk.

- Smart energy grids: AI multi-agent systems balance supply and demand in real time.

These examples highlight the tangible benefits of AI agent builders for developing high-performing, transparent, and ethical multi-agent systems.

The Future of AI in Multi-Agent Scenarios

Future trends include:

- Hybrid human-AI teams for complex decision-making.

- Increasing adoption of ethical AI frameworks in multi-agent environments.

- Wider use of AI agent development platforms for scalable simulations.

- Advanced explainable AI in multi-agent systems to enhance trust and reliability.

The future of AI multi-agent systems will rely on collaborative, transparent, and ethical AI to create safer, more efficient, and human-centered solutions.

AI That Learns, Collaborates, and Explains

AI decision-making in multi-agent scenarios is transforming industries. By using RubikChat, developers can design autonomous agents that are transparent, reliable, and ethical.

Explainable AI in multi-agent systems ensures stakeholders understand decisions, fostering trust and adoption. The next generation of collaborative AI will not only make intelligent decisions but also communicate reasoning, enabling humans to work alongside autonomous agents safely and effectively.